In the high-pressure environments of aviation and military command, we are obsessed with data. We track flight hours, sortie rates, and G-tolerance. We pride ourselves on making “objective” decisions. Yet, when it comes to the most complex system in the inventory—the human being—we often revert to a primitive, low-fidelity method of evaluation: the snapshot.

In the U.S. Air Force, these snapshots are our performance reports. When a developmental board spends mere minutes reviewing a twenty-year career, they aren’t seeing the human; they are seeing a thumbnail sketch. As an Aerospace Physiologist, I’ve spent my career studying how the brain processes information under stress. What I’ve realized is that our current evaluation systems are suffering from a systemic “Human Factors” failure.

The Science of the “Quick Look”

When humans are forced to process vast amounts of complex data in short windows of time, they tend to rely on heuristics rather than deliberate analysis. Amos Tversky and Nobel laureate Daniel Kahneman (Tversky & Kahneman, 1973) identified the Availability Heuristic, a mental shortcut in which we judge how frequent or likely something is by how easily examples come to mind. In practice, the most memorable detail in a record carries outsized weight.

If an officer is an “out-of-the-box” thinker—perhaps someone who leads a global professional society or runs a specialized digital platform like Human Factors HQ—that data point becomes the most “available.” To a board member operating under a high cognitive load, this unique trait isn’t seen as a force multiplier. Instead, it is often miscategorized as a “distraction.” This is a classic Fundamental Attribution Error (Ross, 1977). Evaluators attribute a non-traditional profile to a lack of effort toward the primary mission, failing to realize that for the true expert, the external passion is the laboratory where the internal mission is perfected.

The “Average” Label: A History of Miscalculation

History is littered with “average” snapshots of extraordinary people.

Consider General George S. Patton. Before he was the “Old Blood and Guts” who helped liberate Europe, his career was nearly derailed by his obsession with polo and his perceived “lack of focus” on traditional staff duties. His superiors often viewed his extracurricular tactical writing and sporting pursuits as distractions. They failed to see that polo was where Patton honed his understanding of rapid, mobile warfare (D’Este, 1995).

Similarly, look at the Indian mathematician Srinivasa Ramanujan. While working as a lowly clerk in Madras, he was dismissed by many as “distracted” because his notebooks were filled with complex mathematical theorems that had nothing to do with his accounting duties. It took a high-fidelity look from G.H. Hardy at Cambridge to realize that Ramanujan wasn’t a distracted clerk; he was a generational genius whose “side project” would eventually redefine number theory (Kanigel, 1991).

When a board labels a high-performer as “average,” they are often not scoring the person; they are scoring their own inability to process a high-signal record in the time available.

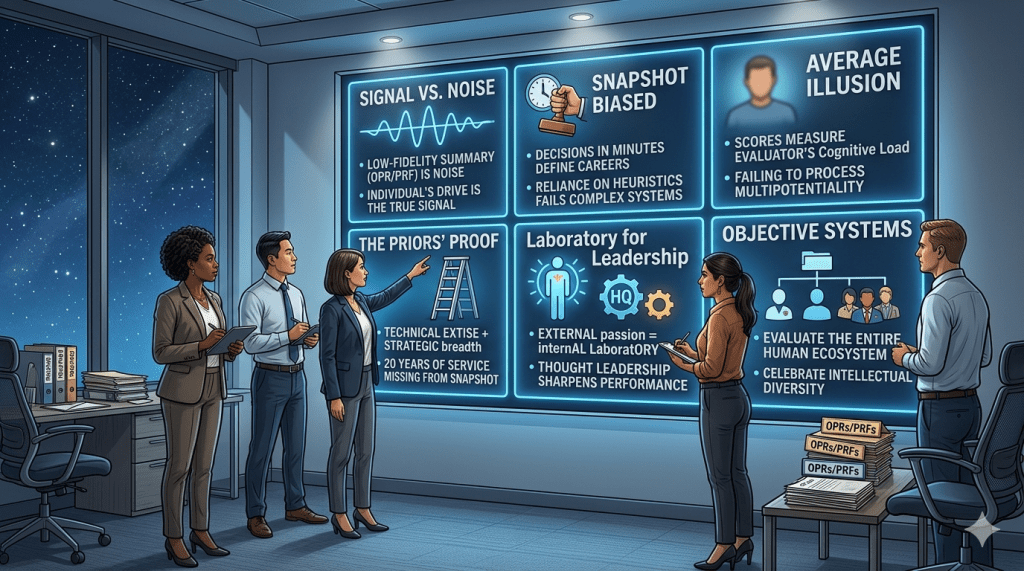

A Human Factors breakdown of the evaluation process. When we rely on low-fidelity snapshots, we risk systemic bias and the devaluation of complex talent.

The Prior-Enlisted Advantage

In my own journey, from a Technical Sergeant and “Technician of the Year” to a Major managing $1.8B in assets across USAFE and NATO, the “signal” has always been my ability to bridge technical expertise with strategic vision. I have overseen the overhaul of training for 20,000 aircrew and led diplomatic health engagements in India, Bulgaria, Chile and Qatar.

Yet, the “snapshot” often misses the Multipotentiality required for these roles. In Human Factors, “fidelity” refers to how closely a representation matches reality. A performance report is a low-fidelity simulation. When evaluators lack the bandwidth to see the “why” (the drive that leads an officer to become a trilingual LEAP member or the President of the Aerospace Physiology Society), they default to a “center-of-mass” score. They succumb to the Horn Effect, the negative counterpart of the halo effect first described by Thorndike (1920), where a single misunderstood data point, like a professional blog, shades an entire record of excellence.

The Fatigue of the System

We also have to account for Decision Fatigue. A study of parole judges found that favorable rulings became less likely as a decision session wore on, which is consistent with tired evaluators defaulting to the “status quo” or the easiest possible answer (Danziger et al., 2011). If you are the 50th record a Colonel has seen that morning, and you don’t look like the 49 records before you, the brain’s survival mechanism is to label you “average” and move on. It is an act of cognitive economy, not an objective truth.

To categorize professional passion as a lack of effort is to misunderstand the nature of modern leadership. My work on Human Factors HQ isn’t a distraction; it is the reason I was able to identify $6.9B in human factor costs for the Department of the Air Force. It is the reason I can brief 18 senior international leaders and find synergy between Danish, Bulgarian, and Serbian medical doctrines.

Conclusion: A Call for High-Fidelity Leadership

The Air Force of 2026 cannot afford “Snapshot Leadership.” We are operating in a Great Power Competition that demands intellectual diversity. If we penalize our thinkers, our writers, and our community leaders because they don’t fit into a pre-formatted box, we will suffer from organizational stagnation.

To my fellow “distracted” leaders: do not let the snapshot define your reality. Your value is not contained within the margins of a PDF. Keep building, keep researching, and keep pushing the boundaries of your craft. The mission doesn’t just need your compliance; it needs your passion.

References

• Danziger, S., Levav, J., & Avnaim-Pesso, L. (2011). Extraneous factors in judicial decisions. Proceedings of the National Academy of Sciences, 108(17), 6889–6892. https://doi.org/10.1073/pnas.1018033108

• D’Este, C. (1995). Patton: A Genius for War. HarperCollins.

• Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux.

• Kanigel, R. (1991). The Man Who Knew Infinity: A Life of the Genius Ramanujan. Scribner.

• Ross, L. (1977). The intuitive psychologist and his shortcomings: Distortions in the attribution process. Advances in Experimental Social Psychology, 10, 173–220.

• Thorndike, E. L. (1920). A constant error in psychological ratings. Journal of Applied Psychology, 4(1), 25–29.

• Tversky, A., & Kahneman, D. (1973). Availability: A heuristic for judging frequency and probability. Cognitive Psychology, 5(2), 207–232.
